The Data Silo Advantage: Why Your AI Agents Need Boundaries

- Aura

- Mar 26


Updated: Mar 27

Your data is your business. Here's how nemo keeps it organized, protected, and working for you.

Is Your LLM Keeping Your Prompts and Reference Data Organized?

If you’ve been using a general-purpose LLM like ChatGPT, Claude, or Copilot for a while, you may have noticed some overlap: you ask the LLM to reference a specific document, and its response includes unrelated information.

You may also have found that there is no easy, default way to prevent data cross-pollination between projects (or folders) within your account.

LLMs Share Context by Default

You and your team likely have projects for different clients or use cases organized in folders; they "look" like separate and distinct projects, but aren't. Your folders are merely a visual reference, an archive of old prompts and responses. You can certainly try requesting a specific reference document, but results are unreliable.

When you use a ChatGPT, Claude, or Copilot account, items like your sales playbook, customer support scripts, internal HR policies, or client briefs aren't truly separated. So when you prompt the AI, the answer can come from a mix of sources.

Picture a library. That is how LLM context works: the information may be organized into categories and volumes, but every volume is accessible from anywhere.

This general lack of boundaries can be acceptable for personal productivity. With business use, it's messy, causing:

Customer support accessing sales reports

A client-facing chatbot referencing internal documents

Client A's confidential data showing up in Client B's conversation

At nemo, privacy was baked in from the beginning. One of the first use cases for nemo was serving a client in a high-stakes industry, where errors caused by incorrect context could have serious consequences for people.

But even if your industry isn’t life or death, you know how important it is to clearly separate which information is used for which purpose.

nemo Makes Sure Your Data and Context Don’t Mix (Unless You Want Them To)

Our approach to separating business data can be compared to private offices in a building, not books in a public library. Each agent you build gets its own space, its own knowledge, and its own boundaries. And you're the one who decides where the walls go.

Read this article to learn how we separate your data with nemo conversational agents, how that benefits your business, and how to handle a growing fleet of agents.

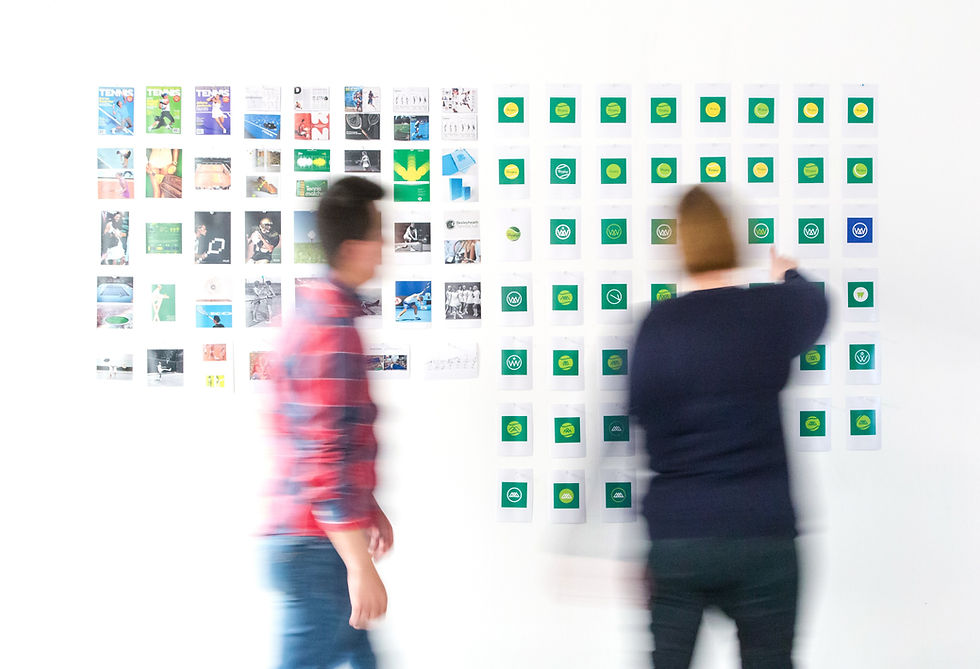

Private Offices, Not a Library

Picture the nemo platform as a building. Every agent you create is a standalone office inside that building. Each office has its own filing cabinet (the knowledge base), its own job description (system instructions), and its own personality (voice and tone).

Even though all your agents live on the same platform, they operate in completely separate memory banks. In technical terms, each agent has its own vector database, which is a dedicated space where its training materials are stored and retrieved.

In practical terms, it means your sales agent can't peek into your HR agent's files, and your customer FAQ agent has no idea what your internal onboarding agent knows.

You don’t need a permissions layer on top of a shared database. The separation is built into the architecture itself.

Why is that important? Because permissions can be misconfigured. A shared database with access rules is only as secure as the person who set up those rules. When each agent has its own dedicated knowledge base from the ground up, there's nothing to misconfigure. The boundaries are structural, not administrative.
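The difference between structural and administrative boundaries can be illustrated with a small sketch. This is not nemo's actual API: `KnowledgeBase`, `Agent`, and `lookup` are hypothetical names, and a toy keyword match stands in for vector similarity search. The point it tries to show is that each agent is built around its own store, so there is no shared database and no access rule that could be set incorrectly.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeBase:
    """Hypothetical stand-in for one agent's dedicated vector database."""
    documents: dict[str, str] = field(default_factory=dict)

    def add(self, name: str, text: str) -> None:
        self.documents[name] = text

    def search(self, query: str) -> list[str]:
        # Toy keyword overlap standing in for vector similarity search.
        words = set(query.lower().split())
        return [
            name
            for name, text in self.documents.items()
            if words & set(text.lower().split())
        ]


@dataclass
class Agent:
    name: str
    # Each agent is created with its own store; nothing is shared.
    knowledge: KnowledgeBase = field(default_factory=KnowledgeBase)

    def lookup(self, query: str) -> list[str]:
        # The only store this method can reach is the agent's own.
        return self.knowledge.search(query)


sales = Agent("sales")
hr = Agent("hr")
sales.knowledge.add("playbook.txt", "pricing tiers and discount rules")
hr.knowledge.add("leave-policy.txt", "parental leave and vacation policy")

print(sales.lookup("vacation policy"))  # []
print(hr.lookup("vacation policy"))     # ['leave-policy.txt']
```

In this sketch the sales agent returns nothing for an HR question, not because a rule blocked it, but because the HR document was never in its store to begin with.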

Three Reasons Data Separation Is Essential for Business

1. Brand Integrity Across Every Conversation

Every agent you build with nemo has its own personality, tone, and purpose. A customer support agent can behave in a warm, patient, and empathetic manner. A sales qualification agent might be direct, persuasive, and action-oriented. Your internal knowledge agent can be thorough and precise.

Because each agent is trained on its own materials with its own instructions, there's no risk of tone bleed. Your support agent won't suddenly start pitching products, and your sales agent won't start troubleshooting technical issues.

This is important. Brand consistency is hard enough to maintain across human teams.

When you add AI into the mix, you need confidence that each conversation stays on-brand and on-task. Separate training for separate roles is how you get that confidence.

2. Security and Client Trust

Data silos are non-negotiable when client trust is at stake.

If your business serves multiple clients, or handles sensitive internal data alongside public-facing content, airtight separation between data sets is a must. A support agent trained on Client A's documentation cannot have access to Client B's files. The end.

With nemo, that separation is the default. Each agent's knowledge base is its own isolated container. Client A's confidential documents are inaccessible to Client B's agent because they live in an entirely separate knowledge base.

For regulated industries or companies that handle proprietary information, this is a meaningful layer of protection. And for your clients, it's a trust signal: their data is handled with care, not tossed into a shared AI bucket.

3. Operational Control (You Define the Boundaries)

Here's something that trips up a lot of teams using general-purpose LLMs: the AI knows too much and too little at the same time.

It might pull in information from its general training data that has nothing to do with your business. Or it might mix up context from different conversations and produce something that sounds confident but is completely wrong; this is the classic "hallucination" problem.

With nemo, an agent only knows what you tell it to know. You upload the documents, you write the instructions, you define the scope. If it's not in the agent's training materials, the agent won't reference it.

That constraint is actually a feature. It gives you predictability. When your FAQ agent answers a customer question, you can trace that answer back to a specific document you provided. When your lead qualification agent asks a prospect a follow-up question, it's following a logic you defined.
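One way to picture that traceability is a lookup that always returns the source document alongside the answer, and returns nothing when no document in scope matches. The sketch below is an illustration under stated assumptions, not nemo's implementation: `Answer`, `answer`, the sample documents, and the word-overlap scoring are all hypothetical.

```python
from typing import NamedTuple, Optional


class Answer(NamedTuple):
    text: str
    source: str  # name of the document the answer came from


# Hypothetical contents of one FAQ agent's knowledge base.
FAQ_DOCS = {
    "returns.md": "Items can be returned within 30 days of delivery.",
    "shipping.md": "Standard shipping takes 3 to 5 business days.",
}


def answer(question: str, docs: dict[str, str]) -> Optional[Answer]:
    """Return the best-matching passage with its source, or None."""
    q_words = set(question.lower().replace("?", "").split())
    best_name, best_score = None, 0
    for name, text in docs.items():
        # Toy scoring: count the words shared by question and document.
        score = len(q_words & set(text.lower().replace(".", "").split()))
        if score > best_score:
            best_name, best_score = name, score
    if best_name is None:
        return None  # nothing in scope matches, so the agent declines
    return Answer(docs[best_name], best_name)


in_scope = answer("How long does shipping take?", FAQ_DOCS)
out_of_scope = answer("What is the CEO's salary?", FAQ_DOCS)
print(in_scope.source)  # shipping.md
print(out_of_scope)     # None
```

Because every answer carries a `source`, an audit becomes a matter of opening the named document and checking it.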

Instead of a mysterious black box, you get a focused agent that stays within your instructions.

Managing Your Fleet: Independence with Flexibility

As your team builds more agents, a natural question comes up: how do I keep all of this organized?

The good news is that the same separation that protects your data also keeps things tidy.

Each Agent Is Independent

Every agent gets its own:

Purpose and personality — what it's built to do

AI model — the best "brain" for that particular job

Knowledge base — the documents and data it learns from

System instructions — the rules that govern its behavior

Deployment channel — where it lives (your website, WhatsApp, Slack, an internal portal)

You manage each one individually. If you want to update what your support agent knows, you swap a document in its knowledge base. That change doesn't touch your sales agent, your onboarding agent, or anything else. No risk of unintended side effects.
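As a rough sketch of what "independent" means here, the five items in the list above can be modeled as one configuration record per agent. The `AgentConfig` class, its field names, and the placeholder model names are assumptions made for illustration only; nemo itself is managed through a visual interface, not this code.

```python
from dataclasses import dataclass, field


@dataclass
class AgentConfig:
    """Hypothetical record mirroring the five per-agent settings."""
    purpose: str
    model: str
    system_instructions: str
    channel: str
    knowledge_base: dict[str, str] = field(default_factory=dict)


support = AgentConfig(
    purpose="Answer customer support questions",
    model="model-a",  # placeholder model name
    system_instructions="Be warm, patient, and empathetic.",
    channel="website",
    knowledge_base={"faq.md": "v1 of the support FAQ"},
)
sales = AgentConfig(
    purpose="Qualify inbound leads",
    model="model-b",  # placeholder model name
    system_instructions="Be direct and action-oriented.",
    channel="WhatsApp",
    knowledge_base={"playbook.md": "v1 of the sales playbook"},
)

sales_before = dict(sales.knowledge_base)

# Swap a document in the support agent's knowledge base only.
support.knowledge_base["faq.md"] = "v2 of the support FAQ"

# The sales agent's knowledge base is unchanged by that update.
print(sales.knowledge_base == sales_before)  # True
```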

Freedom to Repurpose, As Needed

While agents are separate, your content doesn't have to be siloed forever.

Say you've created a brand style guide that you want multiple agents to follow. You can upload that same document to several agents' knowledge bases. Each agent still operates in its own space, but they can reference common materials when it makes sense.

Think of it like giving multiple employees the same company handbook. They each have their own copy on their own desk. They read the same guidelines, but they still do completely different jobs.
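The handbook analogy maps onto copy semantics: each agent receives its own copy of the shared document, so later edits to one copy stay local. The `upload` helper and the document structure below are hypothetical and only meant to illustrate the idea.

```python
import copy


def upload(document: dict, *knowledge_bases: list) -> None:
    """Place an independent copy of the document in each knowledge base."""
    for kb in knowledge_bases:
        kb.append(copy.deepcopy(document))


style_guide = {
    "name": "brand-style-guide.md",
    "text": "Use plain, friendly language.",
}

support_kb: list = []
sales_kb: list = []
upload(style_guide, support_kb, sales_kb)

# Editing the support agent's copy leaves the sales agent's copy untouched.
support_kb[0]["text"] += " Apologize when something goes wrong."

print(sales_kb[0]["text"])  # Use plain, friendly language.
```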

This gives you the best of both worlds: consistency where you want it, separation where you need it.

How This Compares to the Way Most Companies Use AI Today

Most businesses today use AI in one of two ways:

Option A: Everyone on the team shares access to the same LLM (ChatGPT, Claude, Copilot). Each person prompts it individually. There's no structured training, no data separation, and no consistent output across the team.

Option B: The company invests in an enterprise AI platform that promises everything, but only after significant engineering resources are spent configuring, maintaining, and managing data access controls.

nemo sits in a fundamentally different space.

We don't ask you to manage complex permission structures or hire engineers to maintain your AI setup. We also don't leave you with a shared tool that everyone uses differently with unpredictable results.

Instead, we give you a platform where separation is the starting point. You build focused agents for specific jobs, each with its own knowledge and boundaries, and you manage them through a visual interface that any team member can understand.

Want to change what an agent knows? Swap a document. Want to adjust its tone? Update the instructions. Want to audit what it's been trained on? Open its knowledge base and look.

It’s clear, auditable, business-ready AI.

You're the Architect

This is another way we built nemo to keep people in charge.

When you build agents with nemo, you're deciding what each one sees, says, and knows. You're setting the boundaries that keep your data organized, your brand consistent, and your clients' information protected.

nemo simply requires clarity about what you want each agent to accomplish; in exchange you get a platform with clear, simple boundaries.

Other platforms treat data segregation as a complex technical feature you configure after the fact. At nemo it’s built in.

So as you think about your current AI setup, here are a few questions worth considering:

Do you know exactly what data your AI tools have access to right now?

Could one team's information accidentally surface in another team's output?

If a client asked you how their data is protected within your AI systems, would you have a clear answer?

If those questions give you pause, we'd love to talk. Reach out to our team and let's walk through how nemo's approach to data separation could work for your specific situation.
